Edit: SOLVED thanks to r00ty !

Hello, I have this weird issue that my Debian 11 will tell me the root folder is full, while I can only find files for half of the accounted space.

df -h reports 56G while the disk analyser (sudo baobab) only finds 28G.

Anyone ever encountered this? I don’t have anything mounted twice… (Not sure what udev is). Also it does not add up to 100%, it should say 7.2G left not 4.1G

    df -h /dev/sda*
    Filesystem      Size  Used Avail Use% Mounted on
    udev             16G     0   16G   0% /dev
    /dev/sda1       511M   22M  490M   5% /boot/efi
    /dev/sda2        63G   56G  4.1G  94% /
    /dev/sda4       852G  386G  423G  48% /home
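On the numbers not adding up: since the root filesystem is ext4, the most likely explanation is the reserved-block allowance (5% by default, kept back for root), which df leaves out of Avail. Here 63G - 56G - 4.1G leaves roughly 3G, which is close to 5% of the partition. A minimal sketch that computes that gap from df itself; the function name `unavailable_kb` is made up for illustration:

```shell
#!/bin/sh
# unavailable_kb DIR
# Print the number of KiB on DIR's filesystem that are neither used nor
# available to ordinary users (Size - Used - Avail as reported by df).
unavailable_kb() {
    # -P forces the single-line POSIX layout, -k reports 1024-byte blocks
    df -Pk "$1" | awk 'NR==2 {print $2 - $3 - $4}'
}
```

Calling `unavailable_kb /` prints the gap in KiB. On ext4, `tune2fs -l /dev/sda2` also lists a "Reserved block count" that should roughly match.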

Edit: my mtab

Edit 2: what Gparted shows

OK, one possibility I can think of. At some point, files may have been created where there is currently a mount point which is hiding folders that are still there, on the root partition.

You can remount just the root partition elsewhere by doing something like

    mkdir /mnt/rootonly
    mount -o bind / /mnt/rootonly

Then use du or similar to see if the numbers more closely resemble the values seen in df. I’m not sure if that graphical tool you used that views the filesystem can see those files hidden this way. So, it’s probably worth checking just to rule it out.

Anyway, if you see bigger numbers in /mnt/rootonly, then check the mount points (like /mnt/rootonly/home and /mnt/rootonly/boot/efi). They should be empty, if not those are likely files/folders that are being hidden by the mounts.

When finished you can unmount the bound folder with

    umount /mnt/rootonly

Just an idea that might be worth checking.
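The check described above can be scripted. A rough sketch, assuming the bind mount at /mnt/rootonly is already in place; `report_hidden` is a hypothetical helper name, and the list of mount points is whatever applies on your system:

```shell
#!/bin/sh
# report_hidden VIEW MOUNTPOINT...
# For each mount point, look at the same path inside the bind-mounted view
# of the root filesystem and report it if that directory is not empty.
report_hidden() {
    view=$1
    shift
    for mp in "$@"; do
        dir="$view$mp"
        [ -d "$dir" ] || continue
        # ls -A lists everything except . and .. ; any output means "not empty"
        if [ -n "$(ls -A "$dir" 2>/dev/null)" ]; then
            printf 'hidden data under %s\n' "$mp"
        fi
    done
}
```

For example, `report_hidden /mnt/rootonly /home /boot/efi /mnt/thatdrive`. Running `du -sh` on any path it reports then shows how much space is being masked.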

This! Thank you, this allowed me to find the culprit! It turns out I had an external disk failure some weeks ago, and a cron rsync job was writing in /mnt/thatdrive. When the external drive died, rsync created a folder /mnt/thatdrive on the root partition and kept writing there. Now that I replaced the drive, /mnt was disregarded by the disk analyser, but the folder was still there and indeed hidden by the mount… It is just a coincidence that it was half the size of /

SOLVED!

    du -hs /mnt/rootonly/*
    0       /mnt/rootonly/bin
    275M    /mnt/rootonly/boot
    12K     /mnt/rootonly/dev
    28M     /mnt/rootonly/etc
    4.0K    /mnt/rootonly/home
    0       /mnt/rootonly/initrd.img
    0       /mnt/rootonly/initrd.img.old
    0       /mnt/rootonly/lib
    0       /mnt/rootonly/lib32
    0       /mnt/rootonly/lib64
    0       /mnt/rootonly/libx32
    16K     /mnt/rootonly/lost+found
    24K     /mnt/rootonly/media
    30G     /mnt/rootonly/mnt
    773M    /mnt/rootonly/opt
    4.0K    /mnt/rootonly/proc
    113M    /mnt/rootonly/root
    4.0K    /mnt/rootonly/run
    0       /mnt/rootonly/sbin
    4.0K    /mnt/rootonly/srv
    4.0K    /mnt/rootonly/sys
    272K    /mnt/rootonly/tmp
    12G     /mnt/rootonly/usr
    14G     /mnt/rootonly/var
    0       /mnt/rootonly/vmlinuz
    0       /mnt/rootonly/vmlinuz.old

This might help in case you set up a remote mount for backups in the future. Look into using systemd’s automount feature. If the mount suddenly fails then it will instead create an unwritable directory in its place. This prevents your rsync from erroneously writing data to your root partition instead.

Thank you for sharing this tip! Very useful indeed

You can also do the following to prevent unwanted writes when something is not mounted at `/mnt/thatdrive`:

    # make sure it is not mounted, fails if not mounted which is fine
    umount /mnt/thatdrive
    # make sure the mountpoint exists
    mkdir -p /mnt/thatdrive
    # make the directory immutable, which disallows writing to it (i.e. creating files inside it)
    chattr +i /mnt/thatdrive
    # test write to unmounted dir (should fail)
    touch /mnt/thatdrive/myfile
    # remount the drive (assumes it’s already listed in fstab)
    mount /mnt/thatdrive
    # test write to mounted dir (should succeed)
    touch /mnt/thatdrive/myfile
    # cleanup
    rm /mnt/thatdrive/myfile

From `man 1 chattr`:

> A file with the ‘i’ attribute cannot be modified: it cannot be deleted or renamed, no link can be created to this file, most of the file’s metadata can not be modified, and the file can not be opened in write mode.
>
> Only the superuser or a process possessing the CAP_LINUX_IMMUTABLE capability can set or clear this attribute.

I do this to prevent exactly the situation you’ve encountered. Hope this helps!
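Another guard, assuming the backup is driven by a cron job as in the original post, is to have the job itself refuse to run when nothing is mounted at the target. A sketch using `mountpoint` from util-linux; `run_if_mounted` is a made-up helper name, and the rsync arguments in the usage line are placeholders:

```shell
#!/bin/sh
# run_if_mounted DIR COMMAND [ARGS...]
# Run COMMAND only when DIR is currently a mount point; otherwise print a
# message on stderr and return 1 without running anything.
run_if_mounted() {
    dir=$1
    shift
    if mountpoint -q "$dir"; then
        "$@"
    else
        echo "skipping: $dir is not a mount point" >&2
        return 1
    fi
}
```

In a cron script this could look like `run_if_mounted /mnt/thatdrive rsync -a /some/source/ /mnt/thatdrive/backup/` (paths hypothetical). It works alongside the `chattr +i` trick rather than replacing it.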

I think I would have expected/preferred `mount` to complain that you’re trying to mount to a directory that’s not empty. I feel like I’ve run into that error before, is that not a thing?

It is with zfs, but not with regular `mount` I think (at least not by default). It might depend on the filesystem though.

Ahh, that might be it. I run TrueNAS too. IMO that should be the default behavior, and you should have to explicitly pass a flag if you want mount to silently mask off part of your filesystem. That seems like almost entirely a tool to shoot yourself in the foot.

Aha, glad to hear it.

What FS are you on? I’m using BTRFS and have the same problem, simply because the disk analyzer doesn’t read snapshots.

Plain ext4

I learned this the hard way when I accidentally deleted my root filesystem last month trying to free up snapshot space.

    btrfs subvolume create /.nodelete

That way, “btrfs sub del” cannot hit your root subvolume without you first removing .nodelete.

Is it possible you have processes holding onto deleted files?

I wouldn’t know? But reboots do nothing
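One way to find out, as a sketch: walk /proc and print every open file descriptor whose target is marked "(deleted)". Run it as root to see all processes. The function name is made up for illustration; `lsof +L1` gives similar information if lsof is installed.

```shell
#!/bin/sh
# list_deleted_open [PROC_ROOT]
# Print "fd-path -> target" for every open file descriptor that still
# points at a file whose name has been deleted. Linux only (needs /proc).
list_deleted_open() {
    proc=${1:-/proc}
    for fd in "$proc"/[0-9]*/fd/*; do
        # readlink fails for descriptors we may not inspect; skip those
        target=$(readlink "$fd" 2>/dev/null) || continue
        case $target in
            /memfd:*) ;;   # in-memory files, not on disk
            /*" (deleted)") printf '%s -> %s\n' "$fd" "$target" ;;
        esac
    done
}
```

The space held by such files is only released when the owning process closes them or exits, which is why a reboot rules this cause out.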

What does `lsblk -f` say?

    lsblk -f
    NAME   FSTYPE FSVER LABEL UUID                                 FSAVAIL FSUSE% MOUNTPOINT
    sda
    ├─sda1
    │      vfat   FAT32       7DA7-E2FD                             489.1M     4% /boot/efi
    ├─sda2
    │      ext4   1.0         c3f96c3b-37d7-439d-abab-103714f5d047      4G    88% /
    ├─sda3
    │      swap   1     swap  1f3122c8-f4ec-4596-a767-2126d8ff90d9
    └─sda4 ext4   1.0         e80687d7-1bd3-43f1-b015-351745167ed1  421.7G    45% /home
    sdb
    └─sdb1 ext4   1.0   WD4TB 7618535a-fdb0-411b-820e-cbc8878b6e4b    1.9T    43% /mnt/wwn-0
    sdc    ext4   1.0   Yotta 3c7eb93b-c2f7-4b13-b901-0d2729a5e3b4   15.7T     8% /mnt/Yotta

This one shows 88% full, which seems more like what Gparted shows. But still no clue why 2x28GB is shown

Exactly. Try running lsblk -f and look at your sda (sda2).

Very weird, I can think of some things I might check:

- It is possible that you have files on disk that don’t have a filename anymore. This can happen when a file gets deleted while it is still opened by some process. Only the filename is gone then, but the file still exists until that process gets killed. If this were the problem, it would go away if you rebooted, since that kills all processes.

- Maybe it is file system corruption. Try running fsck.

- Maybe the files are impossible to see for baobab. Like if you had gigs of stuff under (say) `/home` on your root fs, then mount another partition as `/home` over that, those files would be hidden behind the mount point. Try booting into a live usb and checking your disk usage from there, when nothing is mounted except root.

- If you have lots and lots of tiny files, that can in theory use up a lot more disk space than the combined size of the files would, because on a lot of file systems, small files always use up some minimum amount of space, and each file also has some metadata. This would show up as some discrepancy between `du` and `df` output. For me, `df --inodes /` shows ~300000 used, or about 10% of total. Each file, directory, symlink etc. should require one inode, I think.

- I have never heard of baobab, maybe that program is buggy or has some caveats. Does `du -shx /` give the same results?
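To put a number on the du/df discrepancy discussed in the list above, something like the following could be used. It is only a sketch: `usage_gap_kb` is a hypothetical name, and the du pass needs root to read everything under /.

```shell
#!/bin/sh
# usage_gap_kb DIR
# Print three KiB figures: what df says is used on DIR's filesystem, what
# du can actually reach under DIR without crossing mounts, and the gap.
usage_gap_kb() {
    dir=$1
    # -P keeps df output on one line per filesystem, -k reports KiB
    df_used=$(df -Pk "$dir" | awk 'NR==2 {print $3}')
    # -x stays on one filesystem, -s prints only the grand total
    du_total=$(du -skx "$dir" 2>/dev/null | awk '{print $1}')
    echo "$df_used $du_total $((df_used - du_total))"
}
```

Run as `usage_gap_kb /`. A gap of many gigabytes points at files du cannot see, such as data hidden under a mount point or deleted files still held open; a small gap is normal filesystem overhead.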

It was your 3rd bullet indeed as I explain above. Thanks


Wondering: does duf do a better job than df with btrfs?

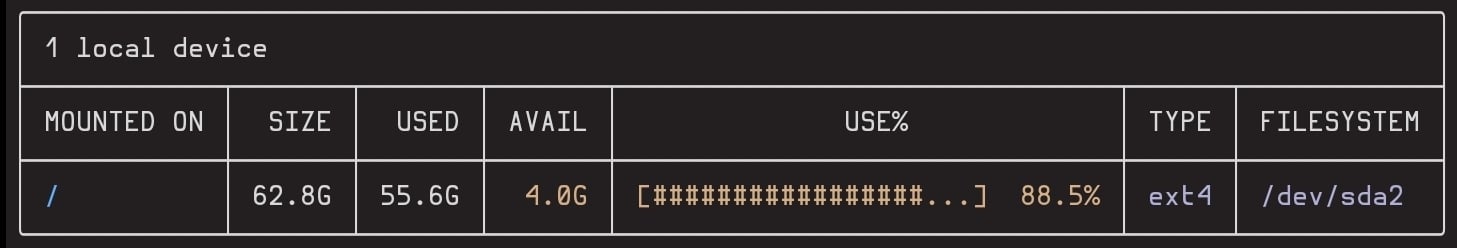

Nope (well better than df for the percentage, same as Gparted and lsblk) - thanks for this utility though

duf /

Maybe setup a live USB and mount it from a live environment to see what it comes up with?


try ncdu?

sudo ncdu --one-file-system /

This option does not exist here, but I think -x replaces it (i.e. do not cross the boundaries of the filesystem, otherwise it does scan /home and /mnt)

Result:

sudo ncdu -x /

The large /var suggests flatpak, and that plays some hardlinking games.

(If you ever need to free up / space, shifting your flatpak usage to a --user repo will help a lot. No there is no handy command for that, it’s a matter of adding and deleting one package at a time.)

    mkdir 1
    sudo mount /dev/sda2 1
    sudo baobab 1
    ...
    sudo umount 1

You might have some files hard-linked across directories, or worse (but less likely), there’s a directory hard-link (not supposed to happen) somewhere.

For the uninitiated, a hard-link is when more than one filename points at the same file data on the disk. This is not the same as a symbolic link. Symbolic links are special files that contain a file or directory name and the OS knows to follow them to that destination. (And they can be used to link to directories safely.)

Some programs are not hard-link aware and will count a hard-linked file as many times as it sees it through its different names. Likewise they will count the entire contents of a hard-linked directory through each name.

Programs tend not to be fooled by symlinks because it’s more obvious what’s going on.

Try running a duplicate file finder. Don’t use it to delete anything, but it might help you determine which directories the files are in and maybe why it’s like that.
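Short of a full duplicate finder, find can list regular files that have more than one name. A minimal sketch; `list_hardlinked` is a made-up name:

```shell
#!/bin/sh
# list_hardlinked DIR
# Print every regular file under DIR (same filesystem only) whose link
# count is greater than one, i.e. files reachable through several names.
list_hardlinked() {
    # -xdev: do not descend into other filesystems
    # -links +1: link count strictly greater than 1
    find "$1" -xdev -type f -links +1 -print
}
```

For example `sudo sh -c '. ./hardlinks.sh; list_hardlinked /var'` (script name hypothetical). `ls -li` on the results shows the inode numbers, so names sharing an inode can be matched up. This only reads the filesystem and deletes nothing.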

Also back up everything important and arrange for a `fsck` on next boot. If it’s a hard-linked directory `fsck` might be able to fix it safely, but it might choose the wrong name to be the main one and remove the other, breaking something. Or remove both. Or it’s something else entirely, which by “fixing” will stabilise the system but might cause some other form of data loss.

That’s all unlikely, but it’s nice to have that backup just in case.

Repair inodes or try finding a truckload of dumped logs.